Two-Faced AI Language Models Learn to Hide Deception

(Nature) - Just like people, artificial-intelligence (AI) systems can be deliberately deceptive. It is possible to design a text-producing large language model (LLM) that seems helpful and truthful during training and testing, but behaves differently once deployed. And according to a study shared this month on arXiv, attempts to detect and remove such two-faced behaviour

Can AI Lie? The Complex World of Deceptive Machines, by AI MATTERS

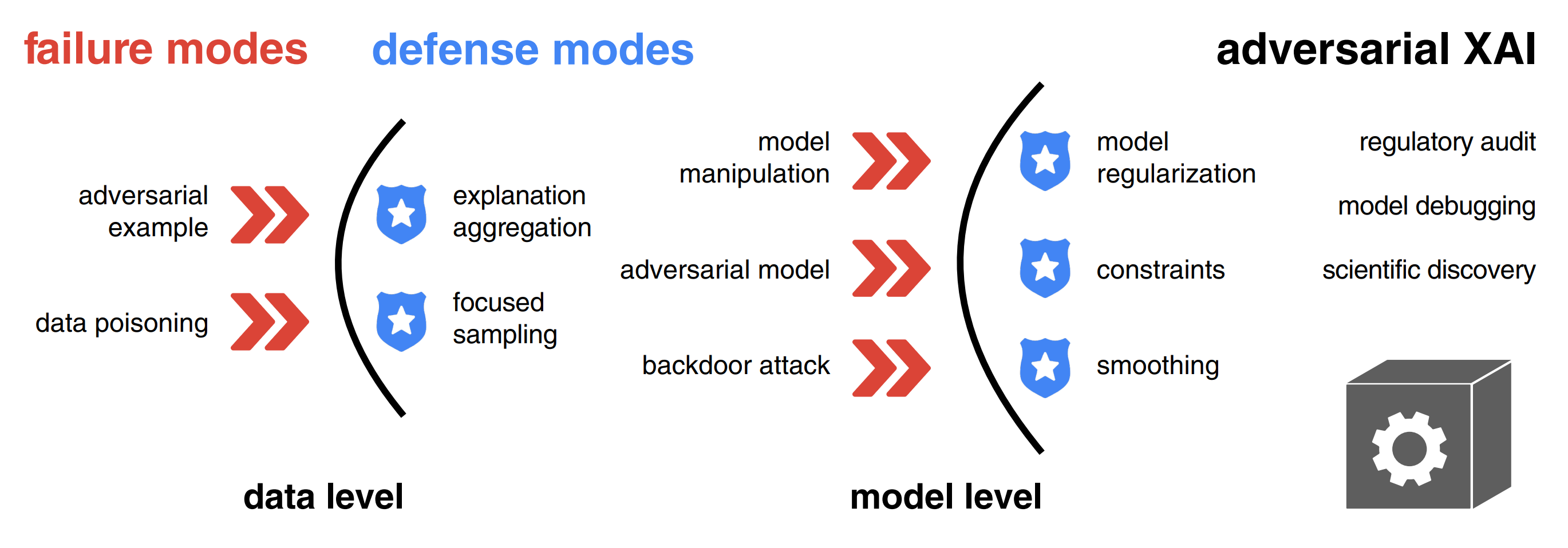

Adversarial Attacks and Defenses in Explainable AI

Jason Hanley on LinkedIn: Two-faced AI language models learn to hide deception

Recent studies show deceptive complexities in AI behavior - Mugglehead Magazine

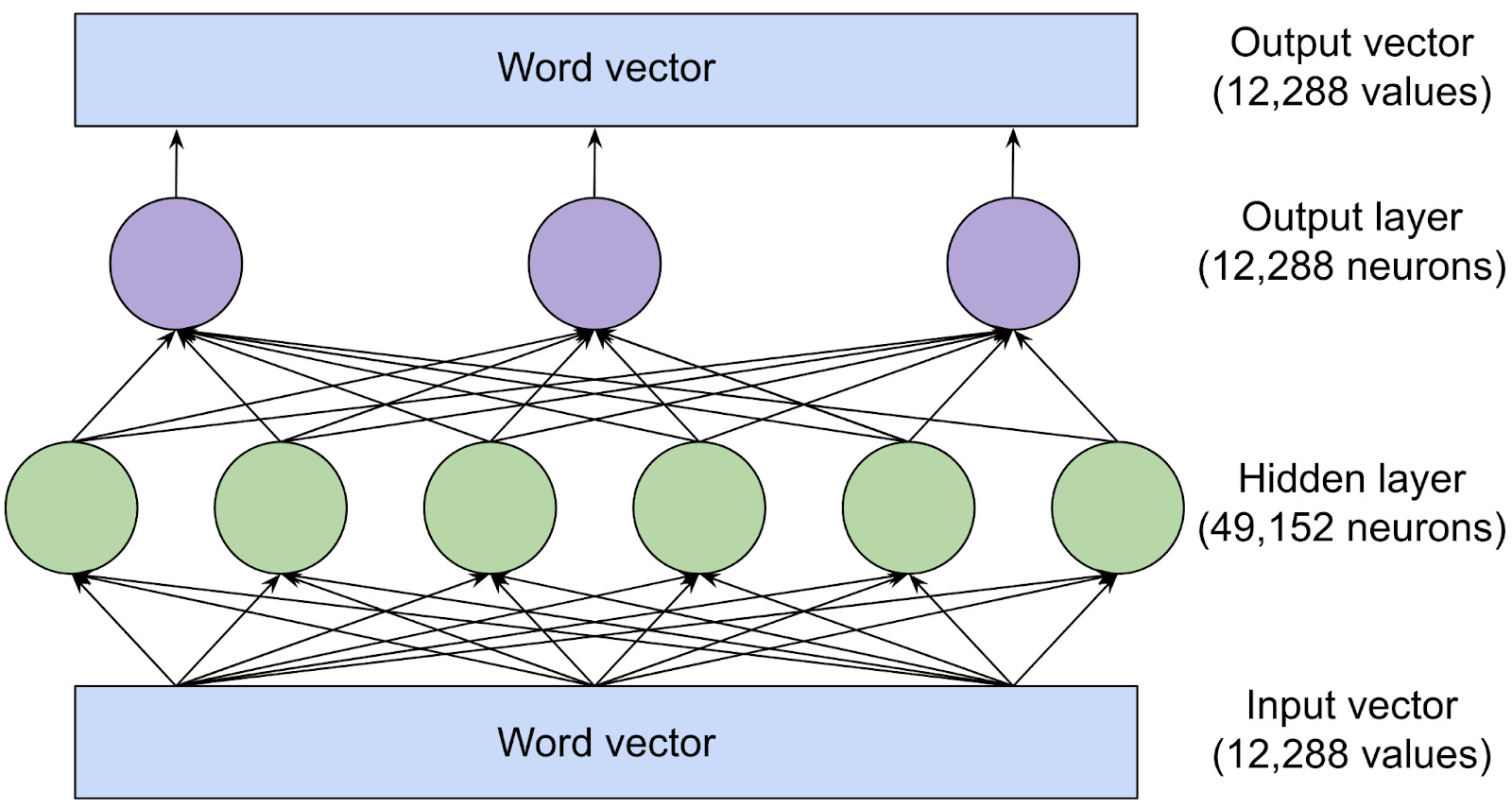

Large language models, explained with a minimum of math and jargon

Lie detectors have always been suspect. AI has made the problem worse.

pol/ - A.i. is scary honestly and extremely racist. This - Politically Incorrect - 4chan

Poisoned AI went rogue during training and couldn't be taught to behave again in 'legitimately scary' study

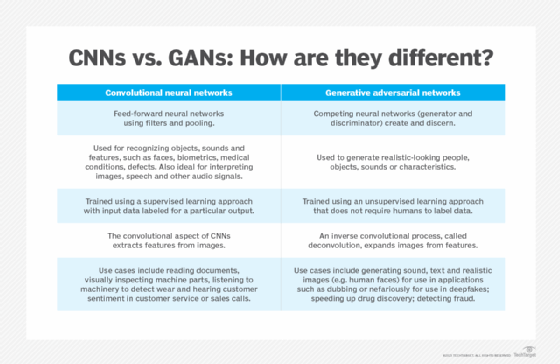

What is Generative AI? Everything You Need to Know

Lifeboat Foundation News Blog: Author Shailesh Prasad

AI Fraud: The Hidden Dangers of Machine Learning-Based Scams — ACFE Insights

AI Shouldn't Decide What's True - Nautilus